An Open Specification for Agentic Security Evaluation

In the age of AI, the real game changer is not just the latest LLM; it’s how you put it to work. That’s why we’re open-sourcing the Foundry Security Spec, a battle-tested blueprint for building an agentic security evaluation system. Because the framework is model-agnostic and stack-agnostic, organizations can build a harness that fits their unique environment. In sharing what we’ve learned, our goal is to help the community of defenders move faster and smarter, and to help organizations shift from noisy alerts to verifiable security findings that drive impact.

The operating model of cybersecurity has fundamentally shifted. As frontier AI models create a new dual-front challenge, attackers are now identifying vulnerabilities at machine speed, leaving security teams struggling to keep pace with manual, legacy processes. At Cisco, we recognize that the old “find and patch” cycle is no longer sufficient to address this new velocity of risk. However, the true potential of these models is realized only when we combine the right harness – the agents and orchestration – with the skilled professionals who drive them. By moving beyond incremental productivity gains to rethink how we find and fix vulnerabilities at scale, we are introducing the Foundry Security Spec as a critical opportunity to empower our teams and help tip the scales in favor of the defenders. This work from Cisco is informed by lessons learned and capabilities developed through advanced security engineering efforts within our internal security team.

Foundry Security Spec is meant to be used with GitHub’s spec-kit, which is an industry-wide set of spec-driven development workflows that can be used with different AI agents.

Foundry is published as two main artifacts and a set of supporting documents:

- The “spec” artifact — eight core agent roles, five extension roles, the finding lifecycle, the coordination substrate, and roughly 130 functional requirements, each with an inline rationale explaining why it exists.

- The “constitution” artifact — eleven inviolable principles. Every one of them encodes a real production failure we shipped, diagnosed, and fixed.

The Problem Foundry Solves

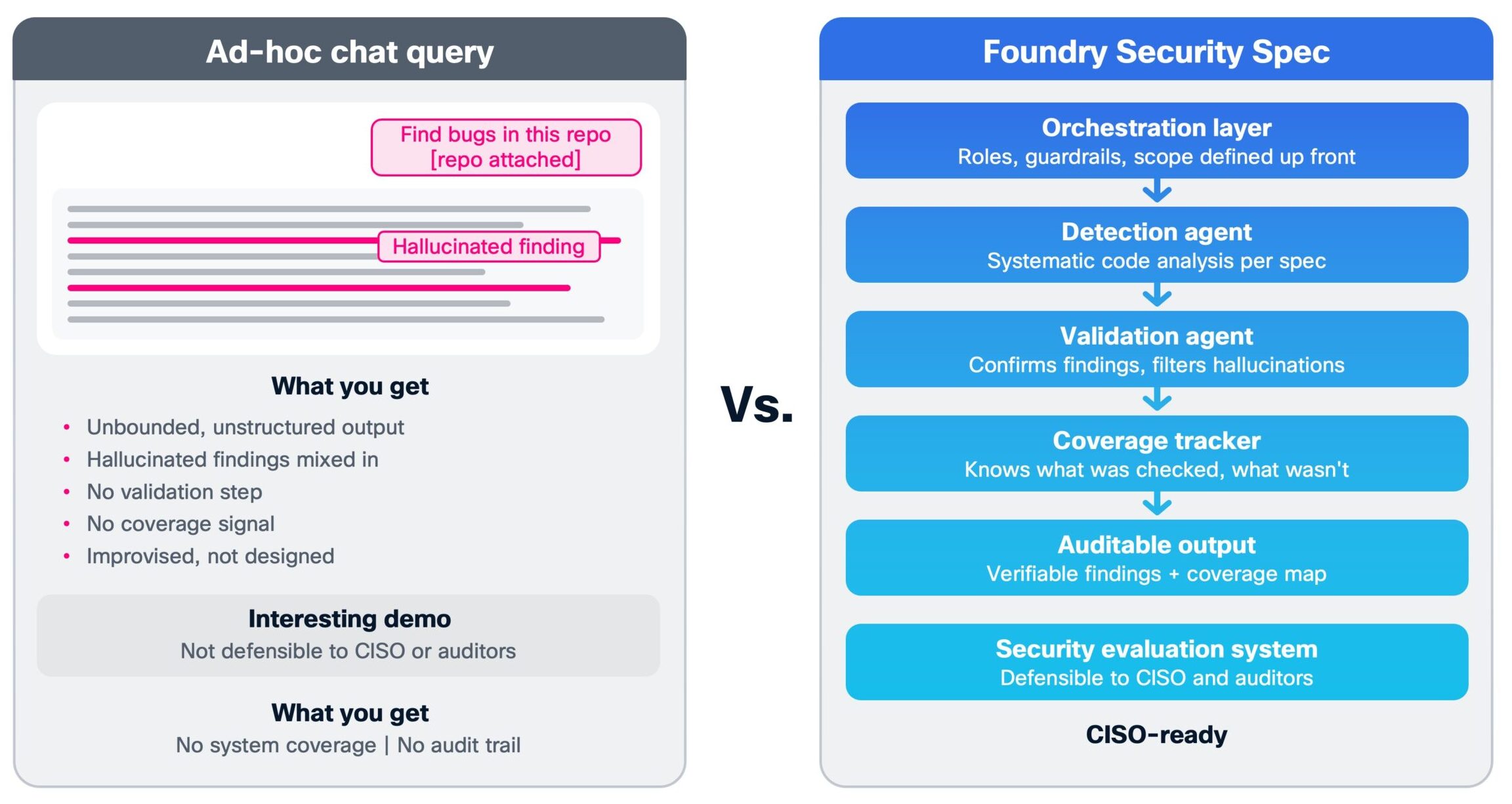

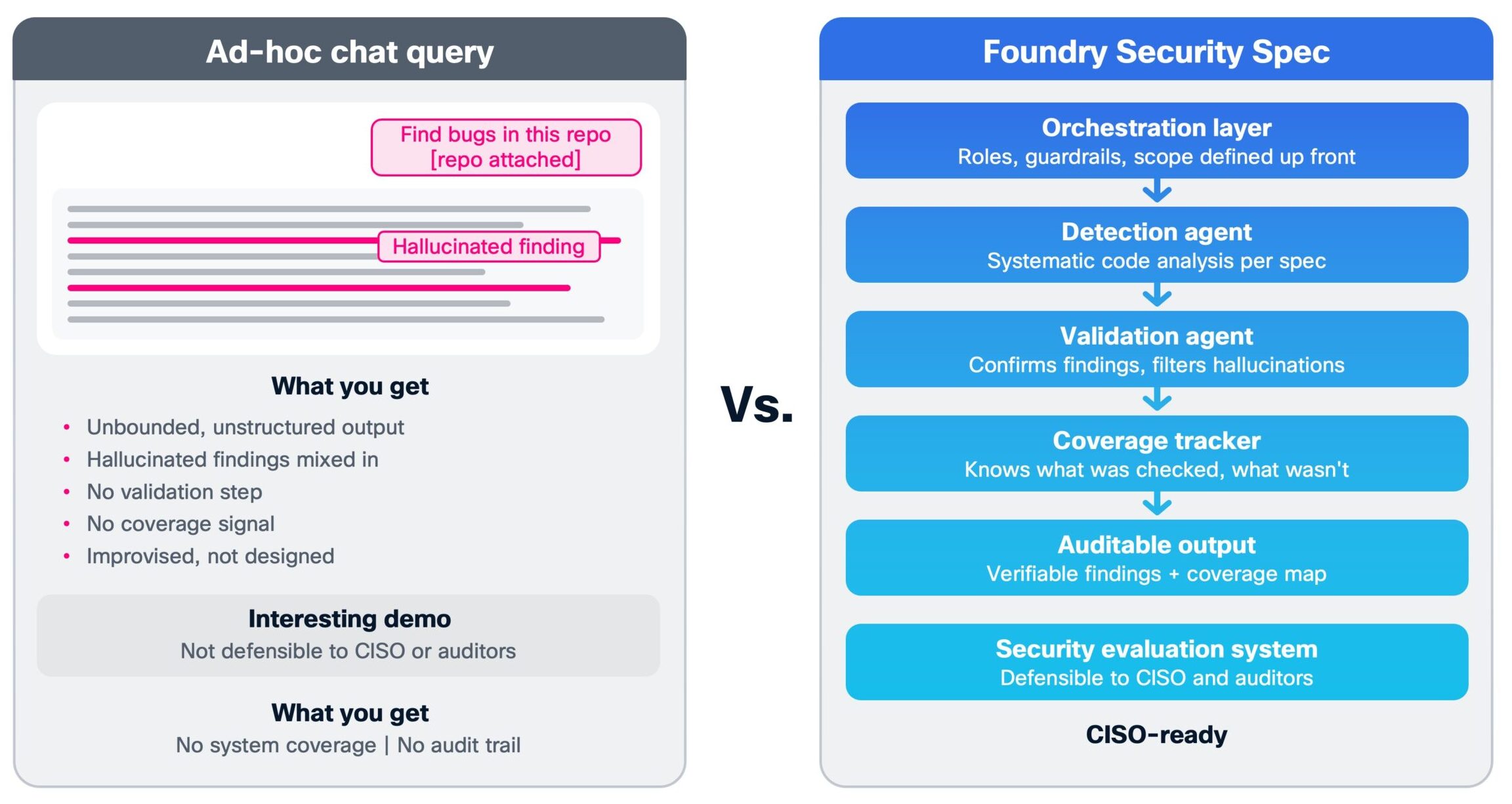

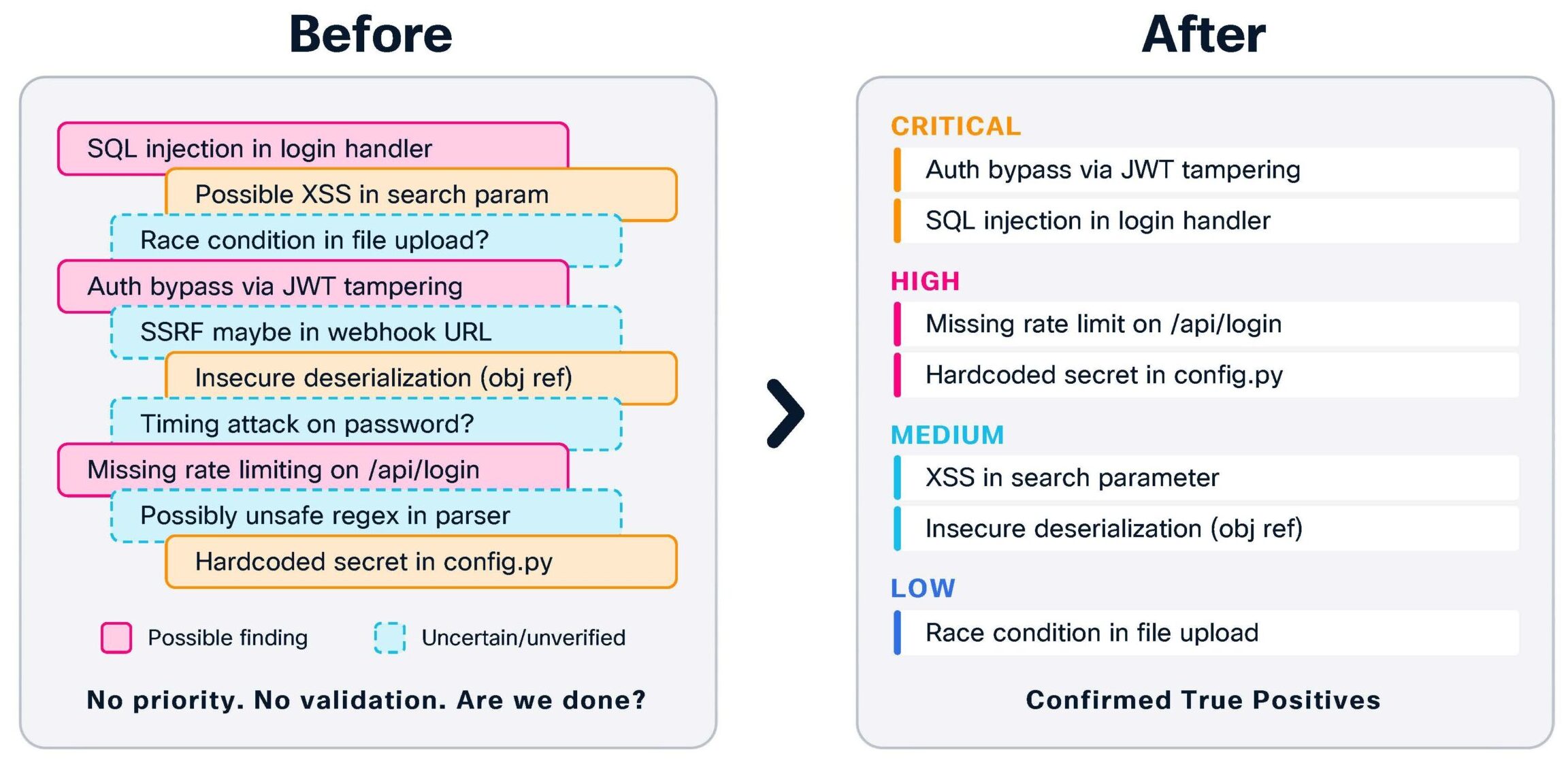

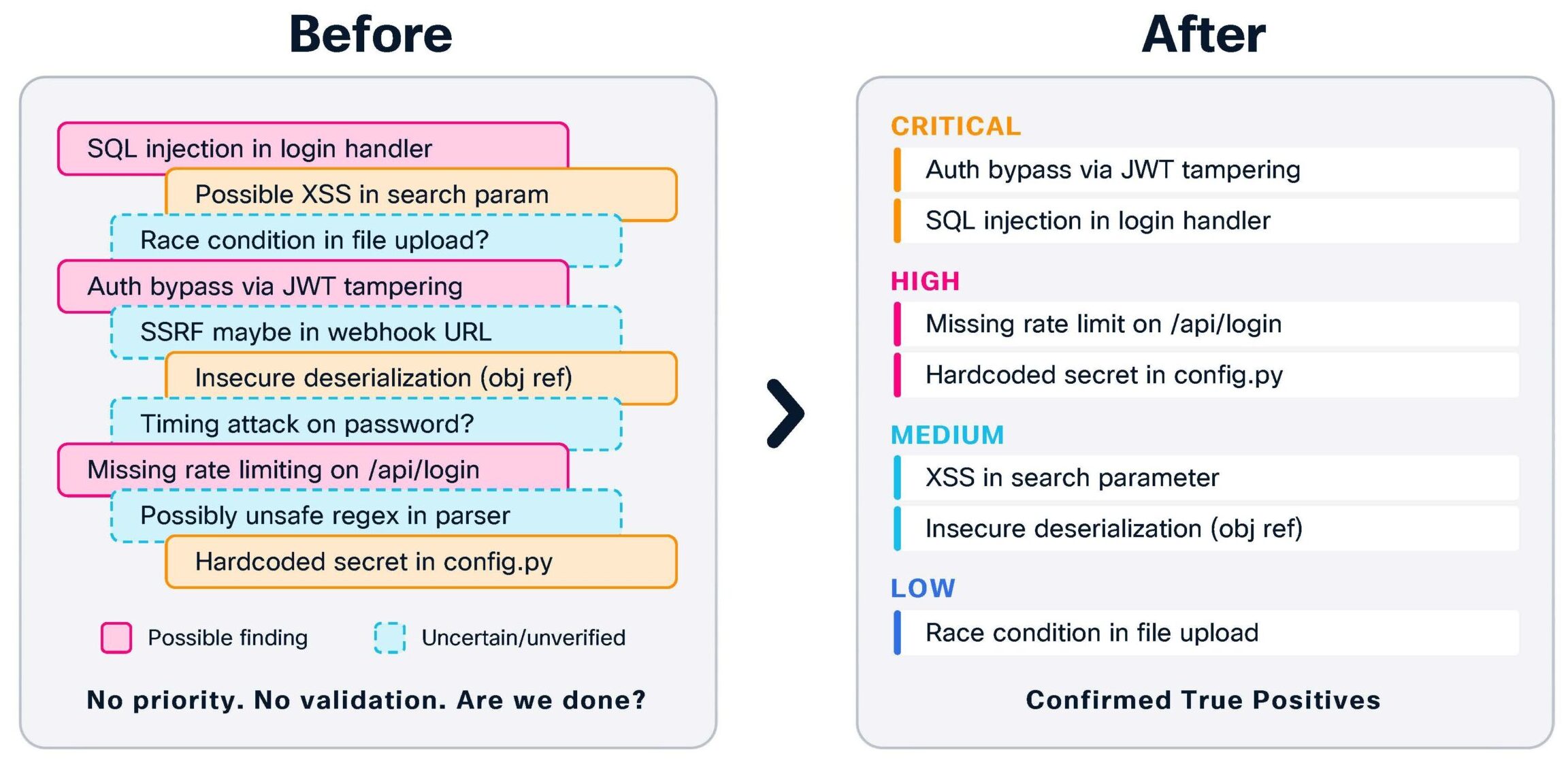

Every security team with access to a frontier LLM has tried the same thing at least once: toss a repo at the model and ask it to “find the bugs.” The result is usually a wall of unbounded, unverifiable output that mixes sharp insights with hallucinated findings, with no way to know what was missed or when you’re actually done. A full agentic system like Foundry Security Spec is the antidote to that chaos: it wraps the model in orchestration, roles, and guardrails so that detection, validation, and coverage are designed up front instead of improvised in a chat window. The difference is stark—one is an interesting demo; the other is a security evaluation system you can defend in front of your CISO and your auditors.

Organizations are investing in AI-assisted security and getting back hallucinated findings, false positives at scale, and no coverage signal. Foundry Security Spec is the scaffolding that turns a frontier LLM from “an interesting demo against your codebase” into a security evaluation system that produces:

- A bounded, prioritized, verifiable set of findings.

- A clear “done” signal: the conjunction of an operator-defined coverage floor and an economic yield threshold.

- An auditable provenance chain from detection through triage, validation, and publication.

- Safety guardrails that assume the model will, at some point, try to do the wrong thing, and that constrain it at the substrate, not the prompt.

If you have a frontier LLM and software you are authorized to evaluate, Foundry gives you the shape of the system you need around it.
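To make the “done” signal concrete, here is a minimal Python sketch of the conjunction described in the list above. It rests on assumptions that are not taken from the spec: coverage is measured as the fraction of in-scope units examined, and economic yield as validated findings per unit of spend in the most recent window. The names `StopPolicy`, `EvalSnapshot`, and `is_done` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StopPolicy:
    """Operator-defined stop conditions (illustrative names, not from the spec)."""
    coverage_floor: float   # minimum fraction of in-scope units that must be examined
    yield_threshold: float  # validated findings per unit of spend below which work stops


@dataclass(frozen=True)
class EvalSnapshot:
    """State of an evaluation at a checkpoint (assumed shape)."""
    units_total: int                # in-scope units, e.g. functions
    units_examined: int
    recent_validated_findings: int  # validated findings in the latest window
    recent_spend: float             # cost of the latest window, in any consistent unit


def coverage(s: EvalSnapshot) -> float:
    # An empty scope counts as zero coverage, so it can never satisfy the floor.
    return s.units_examined / s.units_total if s.units_total else 0.0


def marginal_yield(s: EvalSnapshot) -> float:
    # No spend in the window is treated as zero yield.
    return s.recent_validated_findings / s.recent_spend if s.recent_spend else 0.0


def is_done(s: EvalSnapshot, p: StopPolicy) -> bool:
    """Done only when BOTH conditions hold: floor reached AND yield has dropped."""
    return coverage(s) >= p.coverage_floor and marginal_yield(s) < p.yield_threshold
```

Under this reading, an evaluation that is still producing validated findings cheaply keeps running even after the coverage floor is met, and one that has gone quiet cannot stop until the floor is reached.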

How Defenders Can Use Foundry Security Spec to Test Their Software

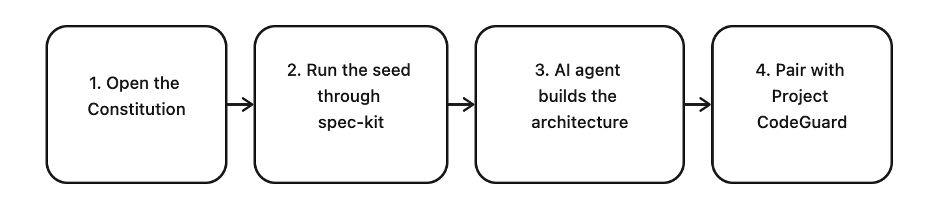

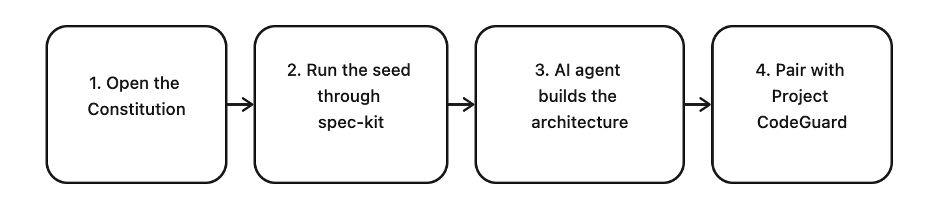

Foundry is designed to be picked up and adapted, not consumed as-is. It is the starting point of your agentic security evaluation journey. The flow looks like this:

- The constitution.md is read by the AI agent (such as Claude Code, Codex, or others) that builds the infrastructure. It is also deliberately written as prose for the human builder and maintainer: each principle’s “Why this is inviolable” paragraph explains the specific production failure that rule prevents, so that an engineer tempted to weaken a principle for convenience encounters the cost of that decision before making it.

- Run the seed through spec-kit. The specification is written to be consumed by spec-kit. The “seed” refers to the initial, minimal setup that gets your spec‑driven project into a known, ready‑to-work state so AI agents (or developers) can start doing useful work consistently.

- The AI agent builds the architecture. The eight core roles (Orchestrator, Indexer, Cartographer, Detector, Triager, Validator, Coverage-Guide, Reporter) each have a defined purpose, defined inputs and outputs, and a list of functional requirements with rationale. You can implement them as subprocess loops, as graph-based pipelines, as serverless functions, or as a bespoke harness. The shape is what transfers; the implementation is yours.

- Pair Foundry Security Spec with Project CodeGuard. Foundry Security Spec’s Detector role consumes a corpus of LLM-evaluated detection rules. The rules come from Project CodeGuard, which Cisco open-sourced before Foundry Security Spec existed and donated to the Coalition for Secure AI (CoSAI). Project CodeGuard was originally built to embed secure-by-default practices into AI coding agent workflows. It provides comprehensive security rules and agent skills that guide AI coding agents to generate more secure code automatically. It has also proven very useful for code review and for autonomous security evaluations and testing.
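As one illustration of how role boundaries and the provenance chain could fit together, the Python sketch below models a finding that moves from detection through triage and validation to publication, with each transition recorded. The stage names follow the lifecycle described in this post; the mapping of roles to stages, the `Finding` class, and the `advance` method are assumptions made for the example, not definitions from the spec.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    DETECTED = 1
    TRIAGED = 2
    VALIDATED = 3
    PUBLISHED = 4


# Hypothetical mapping: which role may move a finding INTO each stage.
ROLE_FOR_STAGE = {
    Stage.DETECTED: "Detector",
    Stage.TRIAGED: "Triager",
    Stage.VALIDATED: "Validator",
    Stage.PUBLISHED: "Reporter",
}


@dataclass
class Finding:
    title: str
    stage: Stage = field(default=Stage.DETECTED, init=False)
    # Append-only record of (stage, role, note); one entry per transition.
    provenance: list = field(default_factory=list, init=False)

    def __post_init__(self) -> None:
        self.provenance.append((Stage.DETECTED, "Detector", "initial detection"))

    def advance(self, role: str, note: str) -> None:
        """Move to the next stage; stages cannot be skipped or taken by the wrong role."""
        if self.stage is Stage.PUBLISHED:
            raise ValueError("finding is already published")
        next_stage = Stage(self.stage.value + 1)
        if ROLE_FOR_STAGE[next_stage] != role:
            raise PermissionError(f"{role} may not move a finding to {next_stage.name}")
        self.stage = next_stage
        self.provenance.append((next_stage, role, note))
```

In this sketch a Reporter cannot publish a finding that the Validator has not confirmed, and the `provenance` list is what an auditor would read back. A real harness would enforce the same rule in whatever coordination layer it uses rather than in a single in-memory object.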

The self-improving detection-to-prevention flywheel:

- CodeGuard rules sweep every function in your target: systematic, repeatable, finds what we already know to look for.

- Foundry Security Spec’s exploratory agents hunt alongside: creative, target-specific, finds what no rule yet describes.

- When exploration confirms something the rules missed, Foundry Security Spec records a rule gap.

- The gap is generalized into a new (or revised) CodeGuard rule and lands in the corpus.

- The next sweep (on this target and every future target) catches that whole class on the first pass.

- Because CodeGuard rules are portable, the same corpus loads into an LLM coding assistant as its secure-coding ruleset. The bug class your last evaluation taught the corpus to detect is now prevented at the keystroke, in every developer’s editor, before the next evaluation ever runs.

Every turn of the loop improves detection here and prevention everywhere.
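The loop above can be sketched in a few lines of Python. This is a toy model under stated assumptions: real CodeGuard rules are LLM-evaluated and far richer than a single `bug_class` string, and generalizing a gap into a rule is engineering work, not a one-line function. The `Rule`, `ConfirmedFinding`, and `Corpus` types are hypothetical and exist only to show the bookkeeping.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Rule:
    rule_id: str
    bug_class: str  # class of bug the rule describes, e.g. "path-traversal"


@dataclass(frozen=True)
class ConfirmedFinding:
    function: str
    bug_class: str


@dataclass
class Corpus:
    rules: list = field(default_factory=list)
    gaps: list = field(default_factory=list)  # confirmed bug classes no rule covered

    def covers(self, bug_class: str) -> bool:
        return any(r.bug_class == bug_class for r in self.rules)

    def record_gap(self, finding: ConfirmedFinding) -> bool:
        """Record a rule gap when exploration confirms something no rule describes."""
        if self.covers(finding.bug_class) or finding.bug_class in self.gaps:
            return False
        self.gaps.append(finding.bug_class)
        return True

    def close_gaps(self) -> list:
        """Placeholder for generalization: turn each recorded gap into a new rule."""
        new_rules = [Rule(f"gap-{bug_class}", bug_class) for bug_class in self.gaps]
        self.rules.extend(new_rules)
        self.gaps.clear()
        return new_rules
```

Once `close_gaps` has run, the next sweep with the same corpus covers the new class on the first pass, which is the property the flywheel depends on.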

A great starting point

We want to be very explicit about this: Foundry Security Spec is a seed and a blueprint spec. It is not a turnkey scanner or a single tool. It is an example of what a sound AI-powered security evaluation system looks like. Your environment, your threat model, and your goals will reshape parts of it. That is by design. Every place where the seed could either dictate a choice or leave it open, we left it open and explained the trade-off.

Foundry Security Spec is an open-source specification, not a managed service. As with any security tool, the responsibility for implementation, oversight, and final decision-making remains with the user. We provide the blueprint for the guardrails, but it’s up to you to ensure that the ‘human-in-the-loop’ remains the final arbiter of security decisions. We encourage users to treat this as a foundational component of their existing security governance program.

A common question is whether this spec will become obsolete as LLMs evolve. The answer is no: it was designed not to become obsolete. Foundry Security Spec is built on functional requirements and roles, not specific model parameters. Whether you are using today’s frontier models or the more complex reasoning agents of tomorrow, the need for an orchestrator, a detector, and a validator will remain constant. The spec is designed to be the stable harness that keeps your security evaluation consistent, regardless of the ‘engine’ under the hood.

Why a specification and not the source?

Our internal implementations are tightly bound to Cisco infrastructure: our LLM gateway, our issue tracker, our private cloud, etc. Open sourcing that code would give defenders something that runs in exactly one environment. It would not transfer.

What transfers is the design: which roles you need and why, what each must guarantee, how findings flow from detection to publication, what “done” means for an evaluation, where the quality gates go, and which shortcuts will hurt you six months in. That design is model-agnostic and infrastructure-neutral.

A genuine contribution to the community

We do not say this lightly: we believe this is one of the most substantive specifications that can help defenders test their environment and software. It is what security teams trying to use a frontier LLM responsibly are currently trying to invent on their own.

It pairs with CodeGuard to form a real, running flywheel between detection (Foundry Security Spec) and prevention (CodeGuard agent skills in your developers’ coding agents). Every adoption strengthens the corpus. Every corpus update raises the floor for everyone.

The security of our global digital infrastructure is a collective effort. We invite you to explore the Foundry Security Spec on GitHub, join the conversation in our community forums, and begin building your own agentic security evaluation system. Visit our repository to get started today.

Build on it. Adapt it. Contribute to it.